S3 Creating Directory to Put Each Uploaded File Into

Trouble Statement

Scenario

A user wants to run a information-processing (e.1000. ML) workload on an EC2 instance. The data to be processed in this workload is a CSV file stored in an S3 bucket.

Currently the user has to manually spin upwardly an EC2 example (with a user-data script that installs the tools and starts the data processing), after uploading the data (a CSV file) to their S3 saucepan.

Wouldn't it be slap-up if this could be automated? So that creation of a file in the S3 saucepan (from any source) would automatically spin up an appropriate EC2 case to process the file? And cosmos of an output file in the same bucket would likewise automatically finish all relevant (east.thou. tagged) EC2 instances?

Requirements

Apply Case 1

When a CSV is uploaded to the inputs "directory" in a given S3 bucket, an EC2 "data-processing" instance (i.east. tagged with {"Purpose": "data-processing"}) should be launched, but but if a "data-processing" tagged instance isn't already running. One instance at a time is sufficient for processing workloads.

Employ Case ii

When a new file is created in the outputs "directory" in the aforementioned S3 saucepan, it means the workload has finished processing, and all "data-processing"-tagged instances should now be identified and terminated.

Solution Using S3 Triggers and Lambda

Every bit explained at Configuring Amazon S3 Outcome Notifications

, S3 tin can transport notifications upon:

- object cosmos

- object removal

- object restore

- replication events

- RRS failures

S3 tin can publish these events to 3 possible destinations:

- SNS

- SQS

- Lambda

Nosotros desire S3 to button object creation notifications directly to a Lambda part, which will have the necessary logic to process these events and determine further actions.

Hither's how to do this using Python3 and boto3 in a uncomplicated Lambda function.

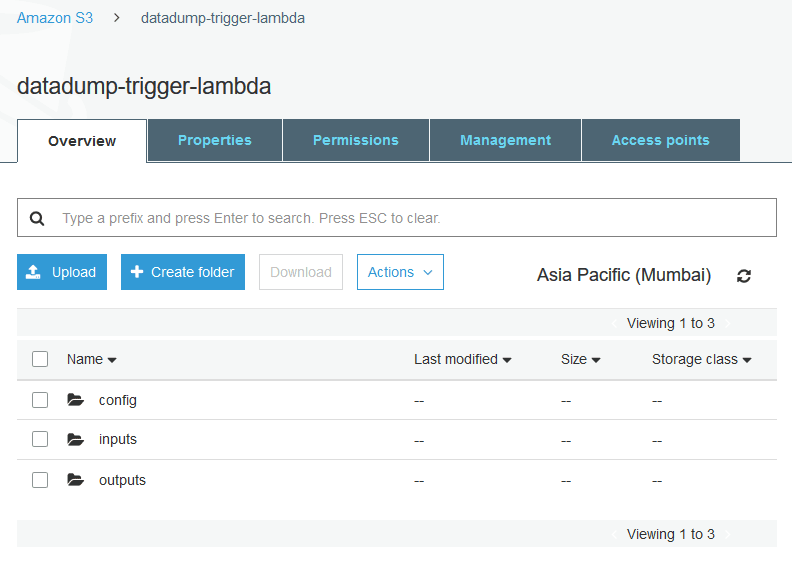

AWS Console: Setup the S3 saucepan

Go to the AWS S3 console. Go to the bucket yous want to use (or create a new one with default settings) for triggering your Lambda function.

Create three "folders" in this bucket ('folders/directories' in S3 are actually 'key-prefixes' or paths) like so:

What are these dirs for?

-

config: to hold diverse settings for the Lambda function -

inputs: a CSV file uploaded here will trigger the ML workload on an EC2 instance -

outputs: whatever file here indicates completion of the ML workload and should crusade any running data-processing (i.e. specially-tagged) EC2 instances to finish

The config binder

We demand to supply the EC2 launch information to the Lambda part. By "launch data" we mean, the instance-blazon, the AMI-Id, the security groups, tags, ssh keypair, user-data, etc.

One manner to do this is to hard-code all of this into the Lambda code itself, merely this is never a good idea. It'south always improve to externalize the config, and 1 way to do this by storing it in the S3 bucket itself like so:

Save this as ec2-launch-config.json in the config folder:

{ "ami" : "ami-0123b531fc646552f" , "region" : "ap-southward-1" , "instance_type" : "t2.nano" , "ssh_key_name" : "ssh-connect" , "security_group_ids" : [ "sg-08b6b31110601e924" ], "filter_tag_key" : "Purpose" , "filter_tag_value" : "information-processing" , "set_new_instance_tags" : [ { "Fundamental" : "Purpose" , "Value" : "information-processing" }, { "Key" : "Name" , "Value" : "ML-runner" } ] }

The params are quite self-explanatory, and you lot can tweak them as you need to.

"filter_tag_key": "Purpose" : "filter_tag_value": "data-processing" --> this is the tag that volition be used to identify (i.e. filter) already-running data-processing EC2 instances.

You'll notice that user-information isn't part of the above JSON config. It'south read in from a dissever file called user-data, just and so that information technology'due south easier to write and maintain:

#!/bin/bash apt-get update -y apt-go install -y apache2 systemctl first apache2 systemctl enable apache2.service echo "Congrats, your setup is a success!" > /var/www/html/alphabetize.html

The above user-data script will install the Apache2 webserver, and writes a congratulatory message that will be served on the example's public IP address.

Lambda function logic

The Lambda function needs to:

- receive an incoming S3 object creation notification JSON object

- parse out the S3 bucket name and S3 object key name from the JSON

- pull in a JSON-based EC2 launch configuration previously stored in S3

- if the S3 object key (i.e. "directory") matches

^inputs/, cheque if nosotros demand to launch a new EC2 example and if and so, launch one - if the S3 object fundamental (i.e. "directory") matches

^outputs/, cease any running tagged instances

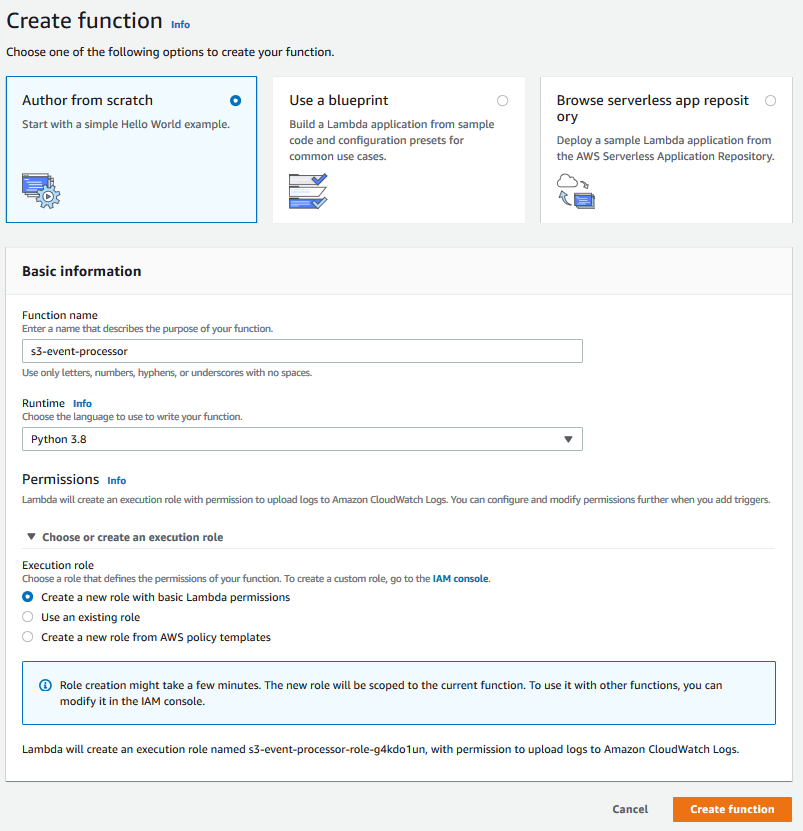

AWS Panel: New Lambda function setup

Go to the AWS Lambda console and click the Create function button.

Select Author from scratch, enter a function name, and select Python 3.8 as the Runtime.

Select Create a new role with basic Lambda permissions in the Choose or create an execution role dropdown.

Click the Create office push button.

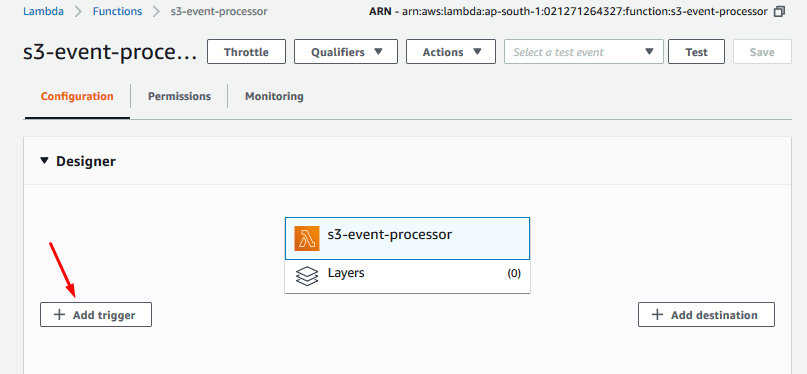

This will have you to the Configuration tab.

Click the Add together Trigger push:

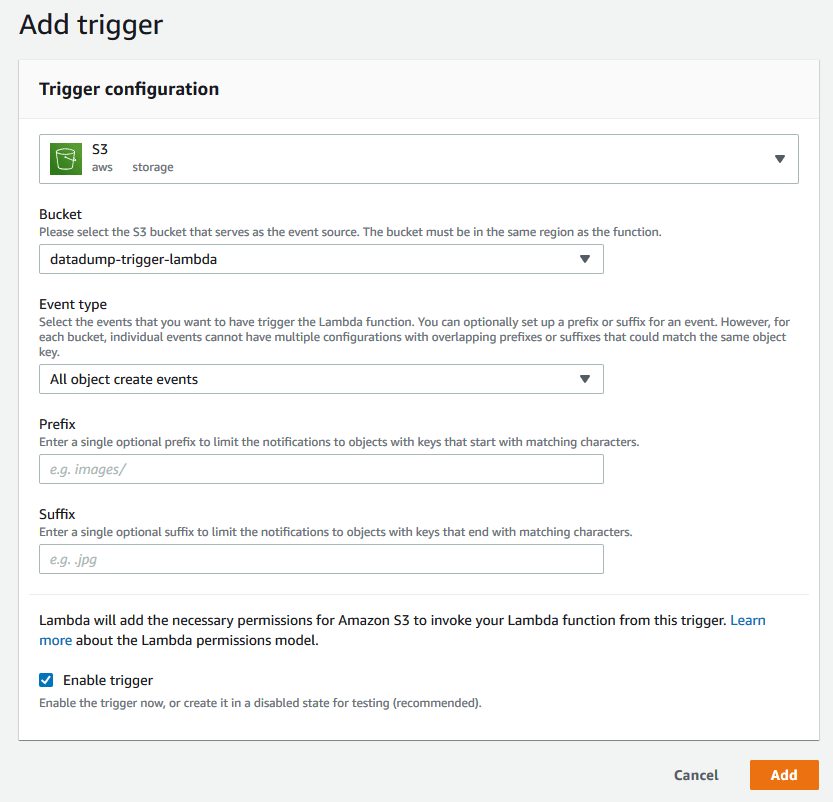

Setup an S3 trigger from the bucket in question, like so:

- Select your bucket

- Select the events you're interested in (

All object create eventsin this case) - Leave

PrefixandSuffixempty, as we volition take intendance of prefixes (inputsandoutputsbucket paths) in our function - Select

Enable trigger

As it says near the lesser of the screenshot:

Lambda will add the necessary permissions for Amazon S3 to invoke your Lambda function from this trigger.

So we don't need to go into S3 to configure notifications separately.

- Click the

Addbutton to save this trigger configuration.

Back on the main Lambda designer tab, you'll discover that S3 is at present linked upwardly to the our newly-created Lambda office:

Click the name of the Lambda function to open upwards the Role code editor underneath the Designer pane.

Lambda function lawmaking

Copy the following Lambda role code into the Function code editor, then click the Save push button on the tiptop-right.

import boto3 import json import base64 from urllib.parse import unquote_plus BUCKET_NAME = "YOUR_S3_BUCKET_NAME" CONFIG_FILE_KEY = "config/ec2-launch-config.json" USER_DATA_FILE_KEY = "config/user-data" BUCKET_INPUT_DIR = "inputs" BUCKET_OUTPUT_DIR = "outputs" def launch_instance ( EC2 , config , user_data ): tag_specs = [{}] tag_specs [ 0 ][ 'ResourceType' ] = 'case' tag_specs [ 0 ][ 'Tags' ] = config [ 'set_new_instance_tags' ] ec2_response = EC2 . run_instances ( ImageId = config [ 'ami' ], # ami-0123b531fc646552f InstanceType = config [ 'instance_type' ], # t2.nano KeyName = config [ 'ssh_key_name' ], # ambar-default MinCount = 1 , MaxCount = 1 , SecurityGroupIds = config [ 'security_group_ids' ], # sg-08b6b31110601e924 TagSpecifications = tag_specs , # UserData=base64.b64encode(user_data).decode("ascii") UserData = user_data ) new_instance_resp = ec2_response [ 'Instances' ][ 0 ] instance_id = new_instance_resp [ 'InstanceId' ] # print(f"[DEBUG] Total ec2 instance response data for '{instance_id}': {new_instance_resp}") return ( instance_id , new_instance_resp ) def lambda_handler ( raw_event , context ): print ( f "Received raw event: { raw_event } " ) # outcome = raw_event['Records'] for record in raw_event [ 'Records' ]: bucket = record [ 's3' ][ 'bucket' ][ 'name' ] print ( f "Triggering S3 Bucket: { bucket } " ) key = unquote_plus ( tape [ 's3' ][ 'object' ][ 'central' ]) impress ( f "Triggering cardinal in S3: { key } " ) # go config from config file stored in S3 S3 = boto3 . customer ( 's3' ) upshot = S3 . get_object ( Bucket = BUCKET_NAME , Key = CONFIG_FILE_KEY ) ec2_config = json . loads ( effect [ "Body" ]. read (). decode ()) print ( f "Config from S3: { ec2_config } " ) ec2_filters = [ { 'Name' : f "tag: { ec2_config [ 'filter_tag_key' ] } " , 'Values' :[ ec2_config [ 'filter_tag_value' ] ] } ] EC2 = boto3 . client ( 'ec2' , region_name = ec2_config [ 'region' ]) # launch new EC2 instance if necessary if bucket == BUCKET_NAME and cardinal . startswith ( f " { BUCKET_INPUT_DIR } /" ): print ( "[INFO] Describing EC2 instances with target tags..." ) resp = EC2 . describe_instances ( Filters = ec2_filters ) # print(f"[DEBUG] describe_instances response: {resp}") if resp [ "Reservations" ] is not []: # at to the lowest degree one instance with target tags was found for reservation in resp [ "Reservations" ] : for example in reservation [ "Instances" ]: print ( f "[INFO] Establish ' { instance [ 'State' ][ 'Name' ] } ' instance ' { example [ 'InstanceId' ] } '" f " having target tags: { instance [ 'Tags' ] } " ) if instance [ 'Country' ][ 'Code' ] == 16 : # instance has target tags AND also is in running state impress ( f "[INFO] instance ' { example [ 'InstanceId' ] } ' is already running: so not launching any more instances" ) return { "newInstanceLaunched" : Simulated , "onetime-instanceId" : instance [ 'InstanceId' ], "new-instanceId" : "" } impress ( "[INFO] Could not find fifty-fifty a single running case matching the desired tag, launching a new one" ) # retrieve EC2 user-information for launch result = S3 . get_object ( Bucket = BUCKET_NAME , Key = USER_DATA_FILE_KEY ) user_data = result [ "Trunk" ]. read () impress ( f "UserData from S3: { user_data } " ) event = launch_instance ( EC2 , ec2_config , user_data ) print ( f "[INFO] LAUNCHED EC2 instance-id ' { event [ 0 ] } '" ) # print(f"[DEBUG] EC2 launch_resp:\n {result[ane]}") return { "newInstanceLaunched" : True , "old-instanceId" : "" , "new-instanceId" : consequence [ 0 ] } # terminate all tagged EC2 instances if saucepan == BUCKET_NAME and key . startswith ( f " { BUCKET_OUTPUT_DIR } /" ): impress ( "[INFO] Describing EC2 instances with target tags..." ) resp = EC2 . describe_instances ( Filters = ec2_filters ) # print(f"[DEBUG] describe_instances response: {resp}") terminated_instance_ids = [] if resp [ "Reservations" ] is not []: # at least 1 instance with target tags was found for reservation in resp [ "Reservations" ] : for instance in reservation [ "Instances" ]: print ( f "[INFO] Plant ' { instance [ 'State' ][ 'Name' ] } ' case ' { instance [ 'InstanceId' ] } '" f " having target tags: { instance [ 'Tags' ] } " ) if instance [ 'Country' ][ 'Code' ] == 16 : # example has target tags AND also is in running land print ( f "[INFO] instance ' { instance [ 'InstanceId' ] } ' is running: terminating it" ) terminated_instance_ids . append ( instance [ 'InstanceId' ]) boto3 . resource ( 'ec2' ). Case ( example [ 'InstanceId' ]). terminate () return { "terminated-instance-ids:" : terminated_instance_ids }

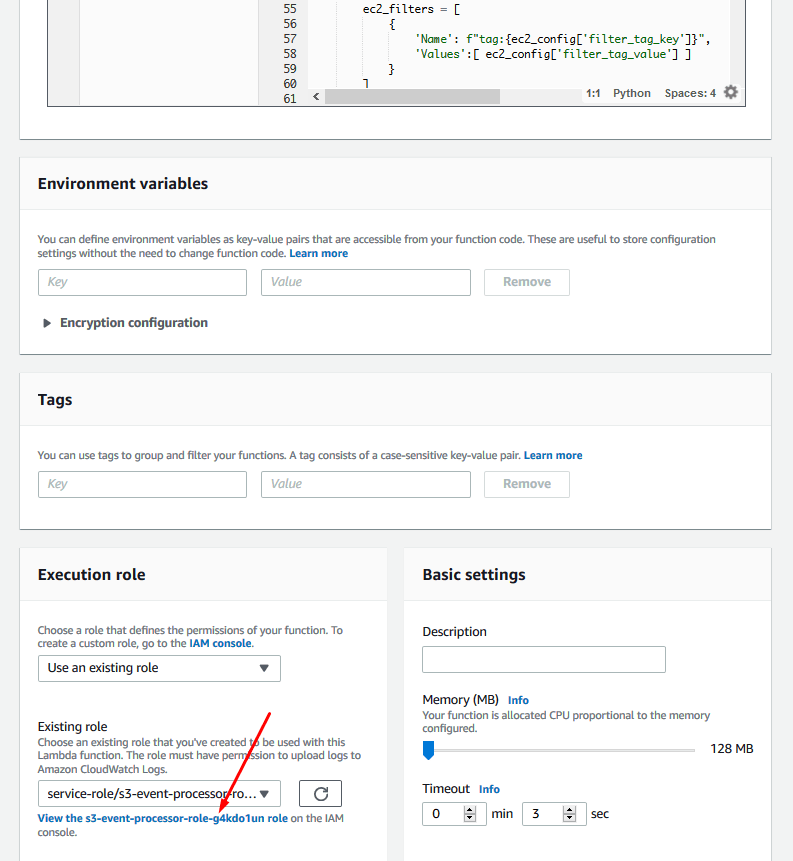

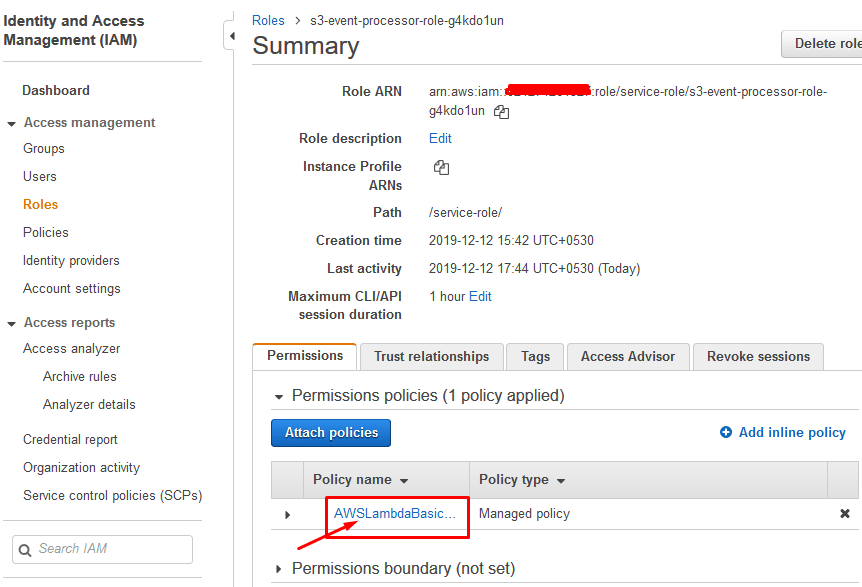

Lambda Execution IAM Role

Our lambda function won't work just yet. It needs S3 access to read in the config and it needs permissions to describe, launch, and stop EC2 instances.

Ringlet down on the Lambda configuration page to the Execution function and click the View role link:

Click on the AWSLambdaBasic... link to edit the lambda function's policy:

Click {} JSON, Edit Policy and then JSON.

Now add the following JSON to the existing Argument Department

{ "Effect" : "Allow" , "Action" : [ "s3:*" ], "Resource" : "*" } , { "Action" : [ "ec2:RunInstances" , "ec2:CreateTags" , "ec2:ReportInstanceStatus" , "ec2:DescribeInstanceStatus" , "ec2:DescribeInstances" , "ec2:TerminateInstances" ], "Effect" : "Let" , "Resource" : "*" }

Click Review Policy and Salve changes.

Go!

Our setup's finally complete. We're ready to test it out!

Test Case i: new example launch

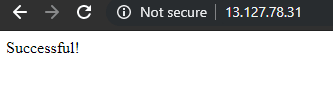

Upload a file from the S3 console into the inputs folder. If you used the verbal same config as above, this would have triggered the Lambda part, which in turn would have launched a new t2.nano case with the Purpose: information-processing tag on it.

If yous put the instance'due south public IP address into a browser (subsequently giving it a minute or so to kick and warm up), y'all should as well run into the examination message served to you: which indicates that the user-data did indeed execute successfully upon boot.

Exam Instance ii: another instance should not launch

As long as there is at least ane Purpose: data-processing tagged case running, another one should not spawn. Allow'due south upload another file to the inputs folder. And indeed the bodily beliefs matches the expectation.

If we kill the already-running case, and then upload some other file to the inputs folder, information technology volition launch a new instance.

Examination Case 3: instance termination condition

Upload a file into the outputs binder. This will trigger the Lambda function into terminating any already-running instances that are tagged with Purpose: data-processing.

Bonus: S3 issue object to test your lambda office

S3-object-creation-notification (to examination Lambda function)

{ "Records" : [ { "eventVersion" : "ii.1" , "eventSource" : "aws:s3" , "awsRegion" : "ap-south-1" , "eventTime" : "2019-09-03T19:37:27.192Z" , "eventName" : "ObjectCreated:Put" , "userIdentity" : { "principalId" : "AWS:AIDAINPONIXQXHT3IKHL2" }, "requestParameters" : { "sourceIPAddress" : "205.255.255.255" }, "responseElements" : { "x-amz-request-id" : "D82B88E5F771F645" , "x-amz-id-ii" : "vlR7PnpV2Ce81l0PRw6jlUpck7Jo5ZsQjryTjKlc5aLWGVHPZLj5NeC6qMa0emYBDXOo6QBU0Wo=" }, "s3" : { "s3SchemaVersion" : "one.0" , "configurationId" : "828aa6fc-f7b5-4305-8584-487c791949c1" , "bucket" : { "proper name" : "YOUR_S3_BUCKET_NAME" , "ownerIdentity" : { "principalId" : "A3I5XTEXAMAI3E" }, "arn" : "arn:aws:s3:::lambda-artifacts-deafc19498e3f2df" }, "object" : { "key" : "b21b84d653bb07b05b1e6b33684dc11b" , "size" : 1305107 , "eTag" : "b21b84d653bb07b05b1e6b33684dc11b" , "sequencer" : "0C0F6F405D6ED209E1" } } } ] }

That's all, folks!

Source: https://dev.to/nonbeing/upload-file-to-s3-launch-ec2-instance-7m9

0 Response to "S3 Creating Directory to Put Each Uploaded File Into"

Publicar un comentario